What Is Promise Theory for Governing Autonomous Agents?

Introduction

Agentic AI is the most exciting shift in enterprise software in decades. Instead of AI that answers questions, you now have AI that acts: autonomously analyzing, deciding, and executing across complex systems.

But autonomy without governance is just chaos with a good interface. The hardest question in agentic AI isn’t “can the agent do the task?” It’s “how do I know the agent will do the right task, in the right way, without needing a human to check its work?”

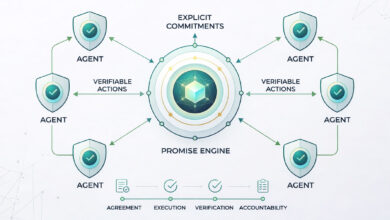

That question, how autonomous agents make reliable, verifiable commitments in a multi-agent world, is the exact problem Promise Theory was designed to solve.

Origin and Core Idea of Promise Theory

Promise Theory was developed by computer scientist Mark Burgess in the early 2000s, originally to model how distributed computing systems coordinate without central control. It grew out of his work on CFEngine, one of the earliest infrastructure automation tools, and became the theoretical foundation for CFEngine 3.

The central claim of Promise Theory: agents can only make promises on behalf of themselves. No agent can promise what another agent will do, only what it will do given certain conditions.

This sounds simple. But it’s a profound architectural shift: it moves coordination from a centralized “command and control” model to a decentralized “commitment and verify” model.

Key principle:

“An agent’s promise is a voluntary declaration of intent, not an obligation imposed from outside. It’s what the agent can guarantee about its own behavior.”

This is why Promise Theory maps so naturally onto agentic AI: modern AI agents are, by design, autonomous actors that need to coordinate without a central brain dictating every move.
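The central claim, that an agent can only promise its own behavior, can be expressed as a small data-model constraint. The following is a minimal Python sketch, not an implementation from Promise Theory literature or from any product; the `Promise` class, its field names, and the `try_promise` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Promise:
    """A voluntary declaration by `promiser` about its own behavior (illustrative)."""
    promiser: str               # agent making the promise
    subject: str                # agent whose behavior the promise describes
    body: str                   # what is being promised
    condition: str = "always"   # circumstances under which the promise holds

    def __post_init__(self) -> None:
        # Core rule: an agent may only make promises about itself.
        if self.promiser != self.subject:
            raise ValueError(
                f"{self.promiser!r} cannot promise on behalf of {self.subject!r}"
            )


def try_promise(promiser: str, subject: str, body: str) -> bool:
    """Return True if the promise is well-formed, False if it is rejected."""
    try:
        Promise(promiser, subject, body)
        return True
    except ValueError:
        return False
```

Here `try_promise("drifter", "drifter", "flag drift")` is accepted, while `try_promise("drifter", "predictor", "forecast")` is rejected because the promiser and the subject differ.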


The Three Hard Problems Promise Theory Solves In Agentic AI

Problem 1: Agent Coordination Without Central Control

- In a multi-agent system, agents need to work together without a bottleneck orchestrator making every decision. But without a coordination model, agents act on conflicting assumptions.

- Promise Theory solves this by making each agent’s intent explicit and visible to the system. Agents don’t just act; they declare what they’re promising to do, so other agents can plan around those commitments rather than conflicting with them.
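One way to picture "explicit and visible" intent is a shared board where each agent publishes its own promises and other agents read them when planning. This is a rough sketch under that assumption; `PromiseBoard`, `plan`, and the capability strings are hypothetical and do not describe any specific platform.

```python
from collections import defaultdict


class PromiseBoard:
    """Shared record of what each agent has promised about itself (illustrative)."""

    def __init__(self):
        self._promises = defaultdict(set)  # agent name -> promised capabilities

    def declare(self, agent, capability):
        """An agent publishes a promise about its own capability."""
        self._promises[agent].add(capability)

    def who_promises(self, capability):
        """List the agents that have promised the given capability."""
        return sorted(a for a, caps in self._promises.items() if capability in caps)


def plan(board, needed):
    """Map each needed capability to the agents promising it, or None if nobody does."""
    return {cap: (board.who_promises(cap) or None) for cap in needed}
```

A planner that sees `None` for a capability knows up front that no agent has committed to it, rather than discovering the gap after a conflicting or missing action.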

Problem 2: Hallucination and Unauthorized Action

- The biggest enterprise fear about agentic AI is an agent taking an action nobody authorized, whether due to hallucination, bad context, or misaligned goals.

- Promise Theory prevents this architecturally: an agent cannot promise to act outside its declared scope. Before execution, the promise is validated against the agent’s defined policy. If conditions aren’t met, the promise isn’t kept and the system knows why.
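The pre-execution check described above can be sketched as a function that compares a requested action with the agent's declared policy and returns a reason either way. This is an illustrative sketch only; `Policy`, `Decision`, `validate`, and the action names are assumptions, not a documented API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    agent: str                  # agent the policy belongs to
    allowed_actions: frozenset  # actions inside the agent's declared scope


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str                 # recorded so the system "knows why"


def validate(policy: Policy, agent: str, action: str, conditions_met: bool) -> Decision:
    """Check a proposed action against the agent's policy before it executes."""
    if agent != policy.agent:
        return Decision(False, f"policy belongs to {policy.agent!r}, not {agent!r}")
    if action not in policy.allowed_actions:
        return Decision(False, f"{action!r} is outside the declared scope of {agent!r}")
    if not conditions_met:
        return Decision(False, f"preconditions for {action!r} are not met")
    return Decision(True, "promise is within scope and conditions hold")
```

Returning a `Decision` with a reason, instead of a bare boolean, keeps the explanation for every refusal available to the rest of the system.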

Problem 3: Accountability and Auditability

- When a multi-agent system produces an outcome, good or bad, who is responsible? In most agentic architectures, this is unanswerable.

- Promise Theory creates a full lineage: every action traces back to a specific agent, a specific promise, the data that triggered it, and the policy version active at the time. Accountability isn’t retroactive; it’s built in.
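The lineage described here (agent, promise, triggering data, policy version) maps naturally onto an append-only audit record. The sketch below assumes a simple in-memory log; `LineageRecord`, `AuditLog`, and every field name are hypothetical.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class LineageRecord:
    action: str          # what was done
    agent: str           # which agent did it
    promise: str         # the promise the action fulfilled
    trigger: str         # reference to the data that triggered it
    policy_version: str  # policy version active at the time


class AuditLog:
    """Append-only log: records are added, never edited (illustrative)."""

    def __init__(self):
        self._records = []

    def record(self, entry: LineageRecord) -> None:
        self._records.append(entry)

    def trace(self, action: str) -> list:
        """Return every lineage record for the given action."""
        return [r for r in self._records if r.action == action]

    def export(self) -> str:
        """Serialize the full log as JSON for external review."""
        return json.dumps([asdict(r) for r in self._records], indent=2)
```

Because each record is immutable and carries the policy version, a reviewer can answer "who did this, under which commitment, and under which rules" from the log alone.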

How Promise Theory Differs From Rules-Based And Obligation-Based Models

| | Rules-Based | Obligation-Based | Promise Theory |
| --- | --- | --- | --- |
| Control Type | External | Imposed | Self-declared |
| Failure Mode | Freezes or acts unpredictably | Silent failure | Doesn’t promise what it can’t deliver |
| Scalability | Brittle at scale | Enforcement breaks down | Scales linearly |
| Agent Honesty | Not guaranteed | Not guaranteed | Built in |

Promise Theory In A Production Agentic Architecture: Scout-itAI

Scout-itAI is the only enterprise platform that has built Promise Theory directly into its agentic architecture, not as a design principle, but as a governing mechanism that runs on every agent action in production.

The platform deploys 8 specialized autonomous agents, each making explicit promises within its defined operational domain. No agent can promise outside its scope. No agent can commit to what another agent controls.

Let’s take a look at how these agents use Promise Theory in action. Three agents serve as concrete examples:

- The Predictor promises to forecast reliability impact only when it has statistically sufficient historical data (100,000 Monte Carlo simulations). If data quality falls below threshold, it doesn’t forecast; it flags the gap instead of hallucinating a confident answer.

- The Drifter promises to flag configuration drift only when deviation crosses a validated statistical threshold. It won’t raise a false alarm to appear useful; it commits to accuracy over volume.

- The Critic promises to evaluate every other agent’s actions against ISO 42001 governance standards and surface a real-time trust score. It acts as the promise-keeper of the system, holding all other agents accountable.
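The Predictor’s behavior, forecasting only when data is sufficient and flagging the gap otherwise, is an example of a conditional promise. The sketch below is not Scout-itAI’s implementation: the `MIN_SAMPLES` threshold, the error-budget framing, and the resampling method are all assumptions made for illustration. Only the default of 100,000 simulation runs comes from the text above.

```python
import random
from dataclasses import dataclass
from typing import Optional

MIN_SAMPLES = 30  # hypothetical data-sufficiency threshold


@dataclass(frozen=True)
class Forecast:
    kept: bool                  # True if the promise to forecast was kept
    p_breach: Optional[float]   # estimated breach probability, if forecast
    note: str                   # explanation, including why no forecast was made


def forecast_breach(daily_errors, budget, horizon=7, runs=100_000, seed=0):
    """Estimate the chance that errors over `horizon` days exceed `budget`."""
    # Condition of the promise: enough history, otherwise flag the gap.
    if len(daily_errors) < MIN_SAMPLES:
        return Forecast(
            False, None,
            f"insufficient data: {len(daily_errors)} < {MIN_SAMPLES} samples",
        )
    rng = random.Random(seed)  # seeded so repeated runs give the same estimate
    breaches = 0
    for _ in range(runs):
        # Monte Carlo step: resample past days to simulate one future window.
        total = sum(rng.choice(daily_errors) for _ in range(horizon))
        if total > budget:
            breaches += 1
    return Forecast(True, breaches / runs, f"{runs} Monte Carlo runs")
```

With only three days of history, `forecast_breach([1, 2, 3], budget=10)` returns a result with `kept=False` and no probability, which is the "flag the gap" path rather than a confident guess.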

The AI² Integrity Layer sits above all agents and validates every promise before execution, ensuring no agent acts outside its declared commitment. This is Promise Theory operationalized at enterprise scale.

Why This Matters For The Future Of Agentic AI

As agentic AI systems grow in complexity (more agents, more domains, more autonomy), the governance problem doesn’t get easier; it compounds. An ungoverned system of 5 agents is manageable. An ungoverned system of 500 is a liability.

Promise Theory scales elegantly because governance is decentralized and agent-local. Each agent governs itself. The system doesn’t need a smarter orchestrator; it needs principled agents.

ISO/IEC 42001, the international standard for AI management systems, effectively demands what Promise Theory already provides: documented intent, verifiable actions, traceable decisions, and accountable agents. Organizations that build on Promise Theory now will have a significant head start on compliance as customers and regulators increasingly expect conformance to such standards.

The question for any organization building or deploying agentic AI isn’t whether they need a governance model. It’s whether they’ll build one reactively or design it in from the start.

Conclusion

Promise Theory reframes what it means to trust an autonomous agent. Trust is no longer about hoping an AI will “do the right thing,” but about having a verifiable architectural guarantee that it will only act within the promises it is designed to make and keep. For organizations deploying agentic AI at scale, Promise Theory isn’t an academic abstraction; it’s the dividing line between automation that compounds risk and automation that compounds reliability.

Scout-itAI is the first solution of its kind to operationalize Promise Theory in enterprise environments where the stakes are real: public safety infrastructure, healthcare systems, and global retail operations. The result is an agentic architecture that is not only powerful, but provably trustworthy by design.

See Scout-itAI’s Promise Engine in action and watch governed agents solve problems your current tools can’t explain. Book a demo

Frequently Asked Questions

What is Promise Theory?

Promise Theory is a scientific framework where autonomous agents coordinate by making voluntary, self-declared commitments rather than following orders from a central authority. Each agent promises only what it can genuinely deliver, within its own defined scope. This makes multi-agent systems transparent, resilient, and trustworthy by design.

Who developed Promise Theory?

Promise Theory was developed by computer scientist Mark Burgess in the early 2000s to model how distributed systems coordinate without central control. It became the foundation for CFEngine 3, the promise-based version of one of the earliest infrastructure automation tools. Today its principles map directly onto modern agentic AI architecture.

How does Promise Theory differ from rules-based systems?

Rules-based systems tell agents what to do through external logic, but often break when situations fall outside predefined rules. Promise Theory is voluntary: agents declare what they will do based on their own capabilities, and if they can’t fulfill a promise, they simply don’t make one. This makes Promise Theory far more honest and resilient at scale.

Why do agentic AI systems need governance?

Without governance, agents can take unauthorized actions, conflict with each other, or make decisions nobody can trace or explain. Promise Theory ensures every agent action is validated against a verifiable commitment before it executes. This creates full accountability without requiring a central controller managing every decision.

How does Promise Theory prevent hallucinations from becoming actions?

In agentic AI, hallucinations aren’t just wrong text; they’re unauthorized real-world actions. Promise Theory prevents this architecturally: an agent cannot act outside its declared scope, and if conditions to fulfill a promise aren’t met, it simply doesn’t act. Unauthorized actions become structurally impossible.

How is a promise different from an SLA?

An SLA is an external agreement with enforcement from outside. A promise is entirely voluntary and self-declared: agents only commit to what they can genuinely control. This honesty is what makes Promise Theory more reliable in distributed systems than obligation-based models.

Does Promise Theory scale to large agent fleets?

Yes. Because each agent governs itself, governance scales linearly with agent count rather than creating bottlenecks at a central authority. Adding more agents simply adds more self-governing units, not more governance complexity. This is why Promise Theory is uniquely suited to enterprise-scale agentic deployments.

How does Promise Theory relate to ISO 42001?

ISO 42001 requires documented AI intent, verifiable decisions, traceable outcomes, and continuous improvement, all of which Promise Theory provides natively. Organizations building on Promise Theory have a structural head start on compliance versus those using black-box AI systems. Scout-itAI’s AI² Integrity Layer is built to satisfy ISO 42001 by default.

What is the AI² Integrity Layer?

The AI² Integrity Layer is Scout-itAI’s governing architecture that validates every agent promise before execution across its 8-agent fleet. It maintains full metadata lineage for every decision and calculates real-time trust scores through The Critic agent. It’s Promise Theory operationalized at enterprise scale.

Is Promise Theory only relevant to IT operations?

No. Promise Theory applies to any multi-agent system where autonomous agents need to coordinate without centralized control. Scout-itAI applies it to IT reliability, but it’s equally relevant to healthcare, finance, supply chain, and any domain where AI agents take real-world actions requiring accountability. IT operations is simply where Scout-itAI has proven it works at enterprise scale.

Tony Davis

Director of Agentic Solutions & Compliance