Promise Theory for Agent Coordination and Accountability

Introduction

You know the feeling when you’ve got an AI system running in your enterprise, but you really have no idea what it’s doing? The dashboard lights up, the agents spring into action, and sometimes things get fixed – but most of the time, you still can’t quite work out what happened, or why.

That’s the problem: AI systems are supposed to be autonomous, but most organizations are reluctant to give them the authority they need to operate effectively, because they don’t trust them to do the right thing.

Promise Theory is set to change that.

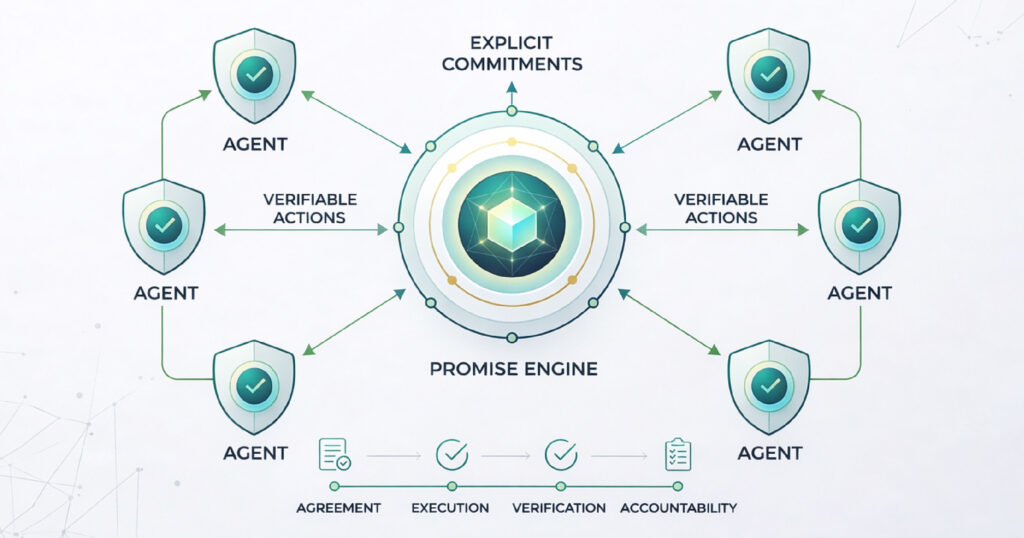

It’s the scientific backbone of Scout-itAI’s Promise Engine, and it may be the most important concept in enterprise AI governance that few people are talking about yet.

In this post, we’ll break down what Promise Theory is, why it matters for agent coordination, and how it addresses the accountability gap that has been plaguing modern agentic AI.

What Is Promise Theory?

Promise Theory is a formal model, originally developed by computer scientist Mark Burgess, that describes how autonomous agents can cooperate effectively. The basic idea is simple: instead of being given orders, agents make promises.

In a traditional command-and-control system, a central controller issues orders to every node. That creates a single point of failure: if the controller fails or makes a bad decision, the whole system can come crashing down.

Promise Theory flips this model on its head. Here’s how it works:

- Each agent in a distributed system declares what it promises to deliver

- Agents operate independently, within their own domain

- They coordinate with each other through explicit, verifiable commitments

This isn’t just abstract theory. It’s the design principle behind CFEngine, the configuration management system Burgess created, and it’s now the foundation of how Scout-itAI governs AI agents in enterprise environments.
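To make the three properties above concrete, here’s a minimal Python sketch of Promise Theory’s basic vocabulary. It isn’t Scout-itAI’s code, and every class and function name in it is hypothetical. It encodes two ideas from Burgess’s formulation: an agent can only make promises about its own behavior, and cooperation on something requires both a promise to give it (+) and a matching promise to accept or use it (-).

```python
# Hypothetical sketch of Promise Theory's basic vocabulary (not Scout-itAI code).
from dataclasses import dataclass
from enum import Enum


class Polarity(Enum):
    GIVE = "+"    # a promise to provide something
    ACCEPT = "-"  # a promise to accept or use what another agent provides


@dataclass(frozen=True)
class Promise:
    promiser: str  # the agent making the promise (always about itself)
    promisee: str  # the agent the promise is made to
    body: str      # what is promised, e.g. "anomaly-alerts"
    polarity: Polarity


class Agent:
    """An autonomous agent: it can only make promises about its own behavior."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.promises: list[Promise] = []

    def promise(self, promisee: str, body: str, polarity: Polarity) -> Promise:
        # The promiser is always this agent. There is deliberately no way to
        # make a promise on another agent's behalf, i.e. no "command".
        p = Promise(self.name, promisee, body, polarity)
        self.promises.append(p)
        return p


def is_bound(giver: Agent, receiver: Agent, body: str) -> bool:
    """Cooperation on `body` exists only when the giver promises +body to the
    receiver AND the receiver independently promises -body back."""
    gives = Promise(giver.name, receiver.name, body, Polarity.GIVE) in giver.promises
    accepts = Promise(receiver.name, giver.name, body, Polarity.ACCEPT) in receiver.promises
    return gives and accepts


monitor = Agent("network-monitor")
responder = Agent("incident-responder")
monitor.promise("incident-responder", "anomaly-alerts", Polarity.GIVE)
print(is_bound(monitor, responder, "anomaly-alerts"))  # False: not yet accepted
responder.promise("network-monitor", "anomaly-alerts", Polarity.ACCEPT)
print(is_bound(monitor, responder, "anomaly-alerts"))  # True
```

Nothing in the sketch lets one agent command another: the responder becomes bound only when it chooses to make its own accepting promise.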

How Promise Theory Eliminates Black-Box Decisions, Drift, and Coordination Failures

The Problem Promise Theory Solves: Agent Accountability in Agentic AI

Most agentic AI platforms today are made up of many specialized agents: they predict failures, detect drift, correlate events, and more. But here’s the thing: getting them to act isn’t hard. The real challenge is getting them to act in ways we can verify and trust.

Without a formal accountability model, agentic AI introduces a whole range of serious risks, from unexplained actions to operational outages.

| Risk | What It Looks Like | Business Impact |
|---|---|---|
| Black-box decisions | Agent acts but no one can explain why | Compliance failure, distrust |
| Agent drift | Behavior slowly deviates from intended scope | Silent reliability erosion |
| Hallucinated actions | AI executes unauthorized or incorrect changes | Outages, security incidents |
| Coordination failures | Agents work at cross-purposes | Cascading system failures |

Promise Theory directly addresses all of these by making agent intent explicit, binding, and measurable – before any action is even taken.

How Promise Theory Powers Agent Coordination

1. Commitments Over Assumptions

Each agent publishes what it will do, not what it might do. A network monitoring agent doesn’t just ‘try to detect anomalies’: it makes a verifiable promise to detect specific categories of variance at or above a defined confidence threshold. This removes much of the ambiguity in multi-agent workflows.
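Here’s a minimal sketch of what a checkable promise body might look like, assuming a promise is simply a set of variance categories plus a minimum confidence. The category names, the agent name, and the 0.9 threshold are hypothetical examples, not Scout-itAI configuration.

```python
# Hypothetical sketch: a promise precise enough to be checked mechanically.
from dataclasses import dataclass


@dataclass(frozen=True)
class DetectionPromise:
    """What the agent commits to: which variance categories it detects,
    and the minimum confidence at which it reports them."""
    agent: str
    categories: frozenset[str]
    min_confidence: float  # 0.0 to 1.0


@dataclass(frozen=True)
class Detection:
    category: str
    confidence: float


def keeps_promise(promise: DetectionPromise, detection: Detection) -> bool:
    """A detection keeps the promise only if it is inside the promised
    categories and meets the promised confidence threshold."""
    return (
        detection.category in promise.categories
        and detection.confidence >= promise.min_confidence
    )


p = DetectionPromise(
    agent="network-monitor",
    categories=frozenset({"latency_spike", "packet_loss"}),
    min_confidence=0.9,
)
print(keeps_promise(p, Detection("latency_spike", 0.95)))   # True
print(keeps_promise(p, Detection("latency_spike", 0.70)))   # False: below threshold
print(keeps_promise(p, Detection("cpu_saturation", 0.99)))  # False: out of scope
```

The point of the design is that "try to detect anomalies" can’t be checked, while this promise can: every detection either keeps it or doesn’t.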

2. Decentralized Cooperation With Auditable Lineage

Because agents coordinate through promises, rather than central commands, there’s no single point of failure. And more importantly, every decision can be traced back to a specific promise made by a specific agent, at a specific time. This is what makes agentic AI auditable.
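One way to get that lineage is an append-only ledger in which every decision must cite a promise made by the same agent, with timestamps on both. The sketch below is hypothetical (the ledger, record types, and IDs are illustrative, not the Promise Engine’s API). The hash chain is our own addition, a common technique for making after-the-fact edits to an audit log detectable.

```python
# Hypothetical sketch of decision lineage: decision -> promise -> agent -> time.
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass(frozen=True)
class PromiseRecord:
    promise_id: str
    agent: str
    body: str
    made_at: str  # ISO 8601 UTC timestamp


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    promise_id: str  # the promise this decision executes
    agent: str
    action: str
    decided_at: str
    prev_hash: str   # hash of the previous entry, for tamper evidence


def _hash(record: DecisionRecord) -> str:
    return hashlib.sha256(json.dumps(asdict(record), sort_keys=True).encode()).hexdigest()


class LineageLedger:
    """Append-only ledger linking each decision to the promise behind it."""

    def __init__(self) -> None:
        self.promises: dict[str, PromiseRecord] = {}
        self.decisions: list[DecisionRecord] = []
        self._last_hash = GENESIS

    def register_promise(self, promise_id: str, agent: str, body: str) -> None:
        now = datetime.now(timezone.utc).isoformat()
        self.promises[promise_id] = PromiseRecord(promise_id, agent, body, now)

    def record_decision(self, decision_id: str, promise_id: str,
                        agent: str, action: str) -> DecisionRecord:
        promise = self.promises.get(promise_id)
        # A decision is only accepted if it cites a promise made by the same agent.
        if promise is None or promise.agent != agent:
            raise ValueError(f"{agent} has no promise {promise_id!r} to execute")
        record = DecisionRecord(decision_id, promise_id, agent, action,
                                datetime.now(timezone.utc).isoformat(), self._last_hash)
        self.decisions.append(record)
        self._last_hash = _hash(record)
        return record

    def trace(self, decision_id: str) -> tuple[DecisionRecord, PromiseRecord]:
        """Return the decision and the promise behind it: who, what, and when."""
        for d in self.decisions:
            if d.decision_id == decision_id:
                return d, self.promises[d.promise_id]
        raise KeyError(decision_id)

    def verify_chain(self) -> bool:
        """Recompute the hash chain; any edited entry breaks it."""
        expected = GENESIS
        for d in self.decisions:
            if d.prev_hash != expected:
                return False
            expected = _hash(d)
        return expected == self._last_hash


ledger = LineageLedger()
ledger.register_promise("p-1", "drift-detector", "flag configuration drift")
ledger.record_decision("d-1", "p-1", "drift-detector", "open ticket for host-42")
decision, promise = ledger.trace("d-1")
print(decision.agent, "->", promise.body)  # drift-detector -> flag configuration drift
print(ledger.verify_chain())  # True
```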

3. Drift Detection as a First-Class Function

When an agent’s behavior starts to deviate from its promise, the system flags it immediately. This is what Scout-itAI calls promise drift, and detecting it early is the difference between a minor adjustment and a major outage.
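A simple way to operationalize promise drift, assuming each action can be scored as kept or broken against the agent’s promise, is to watch the keep rate over a sliding window. The class name, window size, and tolerance below are hypothetical tuning choices; this is an illustration, not the Promise Engine’s detection algorithm.

```python
# Hypothetical sketch: flag promise drift from a sliding window of outcomes.
from collections import deque


class PromiseDriftMonitor:
    """Tracks whether recent actions kept the agent's promise and flags
    drift when the keep rate in a full window drops below a tolerance."""

    def __init__(self, window: int = 20, min_keep_rate: float = 0.95) -> None:
        self.outcomes: deque[bool] = deque(maxlen=window)  # oldest entries fall off
        self.min_keep_rate = min_keep_rate

    def observe(self, kept: bool) -> None:
        self.outcomes.append(kept)

    def keep_rate(self) -> float:
        if not self.outcomes:
            return 1.0
        return sum(self.outcomes) / len(self.outcomes)

    def drifting(self) -> bool:
        # Only judge once the window is full, to avoid flagging tiny samples.
        return (len(self.outcomes) == self.outcomes.maxlen
                and self.keep_rate() < self.min_keep_rate)


monitor = PromiseDriftMonitor(window=10, min_keep_rate=0.9)
for _ in range(10):
    monitor.observe(True)
print(monitor.drifting())  # False
for _ in range(2):
    monitor.observe(False)  # window now holds 8 kept, 2 broken
print(monitor.keep_rate(), monitor.drifting())  # 0.8 True
```

A sliding window catches gradual deviation that a single-event check would miss, which is exactly the "silent" failure mode drift describes.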

Learn more about how Scout-itAI structures agent coordination in the Promise Theory Engine overview.

The Scout-itAI AI² Integrity Layer: Promise Theory in Action

Scout-itAI built its AI² (AI Integrity and Intelligence) layer directly on Promise Theory principles. Here’s how the Promise Engine works in practice:

- Every agent evaluates variance, reliability and trust signals before acting

- Actions are validated against the initial promise – not just executed blindly

- Decision lineage is captured end-to-end, enabling full traceability

- Confidence indicators give real-time scoring of agent certainty before execution

- ISO 42001 alignment ensures governance is baked into the core, not bolted on
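The "validate before acting" step can be sketched as a gate that every proposed action passes through. This is a hypothetical illustration under simple assumptions (a promise is a set of allowed actions plus a minimum confidence, and the names are made up); it isn’t the Promise Engine’s API.

```python
# Hypothetical sketch: validate a proposed action against its promise before execution.
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    EXECUTE = "execute"
    BLOCK = "block"


@dataclass(frozen=True)
class ActionPromise:
    agent: str
    allowed_actions: frozenset[str]  # the scope the agent committed to
    min_confidence: float            # certainty required before acting


@dataclass(frozen=True)
class ProposedAction:
    agent: str
    action: str
    confidence: float


def validate(promise: ActionPromise, proposal: ProposedAction) -> tuple[Verdict, str]:
    """Anything outside the promise is blocked, with a reason an auditor can read."""
    if proposal.agent != promise.agent:
        return Verdict.BLOCK, "agent is acting on another agent's promise"
    if proposal.action not in promise.allowed_actions:
        return Verdict.BLOCK, f"'{proposal.action}' is outside the promised scope"
    if proposal.confidence < promise.min_confidence:
        return Verdict.BLOCK, (f"confidence {proposal.confidence:.2f} "
                               f"below {promise.min_confidence:.2f}")
    return Verdict.EXECUTE, "within promise"


promise = ActionPromise("remediator", frozenset({"restart_service", "scale_out"}), 0.8)
print(validate(promise, ProposedAction("remediator", "restart_service", 0.92)))
# (<Verdict.EXECUTE: 'execute'>, 'within promise')
print(validate(promise, ProposedAction("remediator", "drop_database", 0.99))[1])
# 'drop_database' is outside the promised scope
```

Returning a human-readable reason alongside the verdict is a deliberate choice: the reason can be written into the decision lineage, so a blocked action is as traceable as an executed one.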

‘Stop guessing if your AI is working. Start verifying with Promise Theory.’ – Scout-itAI

This architecture means every Scout-itAI deployment delivers what most enterprise AI platforms only promise: explainable decisions, compliance by design, and zero tolerance for unauthorized actions.

Explore the full platform architecture on the AI-Powered Insights page.

Promise Theory vs. Traditional Monitoring: A Direct Comparison

| Capability | Traditional Monitoring | Promise Theory (Scout-itAI) |
|---|---|---|
| Decision transparency | Limited or none | Full decision lineage |
| Agent accountability | Implicit, often absent | Explicit, verifiable promises |
| Drift detection | Manual or reactive | Autonomous, real-time |
| Failure handling | Central-point dependency | Decentralized resilience |
| Governance model | Audit after the fact | Compliant by design |
| AI hallucination risk | High in agentic workflows | Blocked by promise validation |

Why Enterprise IT Needs Promise-Based Agent Accountability Now

Gartner predicts that by 2028, at least 15% of day-to-day work decisions will be made autonomously through agentic AI, up from 0% in 2024. That shift makes agent accountability an operational priority for enterprise IT teams.

Three forces are driving the urgency:

- AI governance standards are maturing. ISO/IEC 42001 gives organizations a formal framework for managing AI responsibly, with emphasis on accountability, transparency, and risk management.

- Agentic AI increases coordination risk. As autonomous systems begin making more decisions, enterprises need stronger ways to define scope, verify actions, and trace outcomes. This is where conventional monitoring alone may be insufficient.

- Regulated environments demand stronger controls. In sectors such as healthcare, finance, and critical infrastructure, organizations increasingly need evidence that AI-supported decisions can be governed, reviewed, and explained.

Promise Theory offers a useful framework for this challenge by treating agents as autonomous actors that make explicit commitments within defined scope. In practice, that can support more accountable and governable AI workflows when combined with policy, validation, and audit controls.

See how Scout-itAI applies this approach to governed AI workflows on the SRE Solutions page.

Meet the Scout-itAI Agents Governed by Promise Theory

All of Scout-itAI’s AI agents operate within a rock-solid promise framework:

- The Predictor delivers 48-hour failure forecasts, with a verifiable confidence level for each.

- The Drifter watches for long-term drift in your configuration.

- The Prophet predicts path health and suggests backup routes.

- The Blender combines disparate data sources (logs, metrics, traces) into actionable insight.

- The Critic continuously checks every other agent’s ISO 42001 compliance and AI² trust score.

- The Bishop coordinates the agents and escalates the right issues to the right people, with full audit trails.

No agent is winging it: every action is the execution of a verified promise.

Conclusion

You’ll never get the trust you need to deploy agentic AI at scale without being able to explain how it makes its decisions. Promise Theory isn’t just philosophy: it’s the engineering design that lets you build verifiable, accountable AI.

Scout-itAI is the only platform that has built this whole model, from the ground up. Not just as a compliance checkbox to be ticked. Not just as an afterthought. It’s the foundation that everything is built on.

If your organization is deploying autonomous AI in production and you can’t trace every decision back to a verified promise, it’s time to change your approach.

Book a demo with Scout-itAI for a live walkthrough of our Promise Engine, and see how every AI decision is made, validated, and kept fully traceable.

Frequently Asked Questions

Promise Theory is a scientific framework where autonomous agents coordinate by declaring explicit commitments about what they will do, rather than being commanded by a central controller. It makes distributed systems more reliable, transparent, and accountable.

In agentic AI, Promise Theory means each AI agent makes a verifiable promise before acting — committing to specific behaviors within defined confidence limits. This eliminates guesswork and makes AI decisions auditable.

AI agent accountability is the ability to trace any autonomous AI decision back to the specific logic, data, and policy that produced it. It ensures that every action taken by an AI agent can be explained, verified, and governed.

Agentic AI governance is the set of policies, frameworks, and technical controls that ensure AI agents operate within defined boundaries, comply with regulations, and produce explainable decisions. Promise Theory provides the architectural foundation for true governance.

Scout-itAI builds its AI² Integrity Layer on Promise Theory. Every agent validates its promises before executing actions, and full decision lineage is captured for traceability. The platform is aligned with ISO 42001 standards for AI management.

Agent drift occurs when an AI agent’s behavior gradually deviates from its intended purpose or promised behavior — often subtly enough to go undetected until it causes a problem. Scout-itAI’s Promise Engine detects drift in real time.

The AI black box problem refers to the inability to explain why an AI made a specific decision. It is a critical barrier to enterprise adoption because it makes AI ungovernable, non-compliant, and difficult to trust. Promise Theory solves this with full decision lineage.

ISO 42001 is the international standard for AI management systems. It provides a framework for organizations to develop, deploy, and govern AI responsibly, with requirements for transparency, accountability, and continuous monitoring. Scout-itAI is aligned with this standard.

Traditional monitoring detects issues after they occur. Promise Theory validates agent intent before any action is taken. This shifts the governance model from reactive to proactive, eliminating entire categories of risk before they can impact production systems.

Promise Theory scales to large deployments. Scout-itAI’s Promise Engine is designed to manage 10,000+ agents under a unified integrity policy. Because each agent operates within its own promise framework, scaling does not introduce coordination bottlenecks or governance gaps.

Tony Davis

Director of Agentic Solutions & Compliance