Promise Theory for Multi-Agent AI Systems

Introduction

Agentic AI is taking off, and things are getting a lot more interesting. What started as a single copilot is quickly turning into small “teams” of agents that work together: they plan, gather information, use tools and see work through to completion. But the minute you add more than one agent to the mix, things get complicated. Even with guardrails and permissions in place, you still find yourself wondering: “what exactly is this agent going to do, and how do I know it made the right decision?”

If you’ve got multiple agents working together, they’re liable to step on each other’s toes, make conflicting calls or start doing things you hadn’t planned for as the tools, prompts and context change over time. Even with all the rules and permissions you can think of, there’s still a major question mark hanging over everything: how am I going to keep track of what all these agents are doing?

That’s why Promise Theory for AI agents matters so much. It’s a pretty straightforward way to design multi-agent systems where responsibilities are clear, boundaries are sensible and there’s built-in proof, so your agentic AI stays predictable, testable and trustworthy as it scales.

Promise Theory, Explained

Promise Theory looks at a system as a group of independent actors that work together by making explicit, clear promises about their own behaviour.

In even plainer terms, an agent should be able to say:

- what it’s going to do

- what it won’t do

- what it needs

- and how it’s going to show its work

That’s why Promise Theory fits so well with agentic AI. Agents don’t behave like traditional software components – they make decisions based on incomplete information, rely on tools that can change, interact with other agents, and sometimes they take the initiative in ways you just can’t predict.

So instead of trying to pretend you can “control” agents the way you control a deterministic program, Promise Theory starts from a much more realistic place: autonomy is a real thing, so coordination has got to be designed.

If you want the original explanation in plain terms, Mark Burgess’s Promise Theory overview is a great starting point.


The key idea: promises are all about local control

The important bit is this: an agent can only promise outcomes it actually controls, and what it controls is its own behaviour. That’s why Promise Theory nudges you away from vague promises like “I will sort it out” and towards promises that are actually testable, such as:

- “I will use approved sources and cite them.”

- “I will not take any irreversible actions without authorisation.”

- “If the certainty level is low, I will stop and escalate.”

You can see what they all have in common: they are clear, they are bounded and they are verifiable. That’s exactly the point of Promise Theory.
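One way to make “testable” concrete is to write each promise as a small check over a record of what the agent actually did. The sketch below is only an illustration: `ActionRecord`, its fields, the source allowlist and the 0.7 confidence floor are all made-up names and values, not part of any Promise Theory library.

```python
from dataclasses import dataclass, field


@dataclass
class ActionRecord:
    """Hypothetical record of one agent step (all field names are assumptions)."""
    sources_cited: list[str] = field(default_factory=list)
    irreversible: bool = False
    authorised: bool = False
    confidence: float = 1.0
    escalated: bool = False


APPROVED_SOURCES = {"internal-wiki", "product-docs"}  # assumed allowlist
CONFIDENCE_FLOOR = 0.7  # assumed meaning of "certainty level is low"


def cites_approved_sources(r: ActionRecord) -> bool:
    # "I will use approved sources and cite them."
    return bool(r.sources_cited) and set(r.sources_cited) <= APPROVED_SOURCES


def no_unauthorised_irreversible_action(r: ActionRecord) -> bool:
    # "I will not take any irreversible actions without authorisation."
    return (not r.irreversible) or r.authorised


def escalates_when_unsure(r: ActionRecord) -> bool:
    # "If the certainty level is low, I will stop and escalate."
    return r.confidence >= CONFIDENCE_FLOOR or r.escalated


PROMISES = [
    cites_approved_sources,
    no_unauthorised_irreversible_action,
    escalates_when_unsure,
]


def broken_promises(r: ActionRecord) -> list[str]:
    """Return the names of the promises this record violates."""
    return [p.__name__ for p in PROMISES if not p(r)]
```

Because each promise is a plain function that returns true or false, it can sit in an ordinary test suite next to the rest of your code.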

Why rules, access controls and permissions aren’t enough when you’re building multi-agent systems

Most teams start off in a pretty good spot: limit what agents can do and set some rules about what they should do.

So you put in permission controls (what an agent can access) and policies (what an agent is allowed to do) – and you still need those – but as soon as you start building multi-agent workflows you hit a gap. Permissions and policies don’t fully address the issue of coordination.

A useful distinction to make is that:

- permissions answer: can this agent do it?

- policies answer: is this agent allowed to do it?

- promises answer: what will this agent do, under what conditions – and what proof will it provide?
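To see how the three questions stay separate, here is a rough sketch that answers each one independently for a single agent and action. The tables and the `review` function are invented for illustration; a real system would pull these from its own access-control and policy layers.

```python
# Hypothetical lookup tables for one agent; every name here is an assumption.
PERMISSIONS = {"researcher": {"search", "read_file"}}   # can this agent do it?
POLICIES = {"researcher": {"search"}}                   # is it allowed to do it?
PROMISES = {"researcher": {"search": "cite every source used"}}  # what proof?


def review(agent: str, action: str) -> dict:
    """Answer the three questions separately for one agent and action."""
    return {
        "can": action in PERMISSIONS.get(agent, set()),
        "allowed": action in POLICIES.get(agent, set()),
        "promised_proof": PROMISES.get(agent, {}).get(action),
    }
```

In this toy setup, `review("researcher", "read_file")` shows an action that is technically possible but not allowed by policy, and about which the agent has promised nothing.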

Yeah – rules and access controls do help to reduce risk. But promises are what create accountability. And in multi-agent systems it’s accountability that keeps things from falling apart.

In fact, frameworks like the NIST AI Risk Management Framework (AI RMF) emphasize that trustworthy AI depends on governance, measurement, and risk controls—not just intentions.

The Real Multi-Agent Problem: Autonomy vs Accountability

When you move from “one agent” to “many agents”, you stop building a feature and start building a system. And systems tend to fail in the same handful of ways.

For example:

- Overlap: Two agents are doing the same work because nobody knows who’s in charge.

- Mismatch: One agent thinks a tool behaves one way, another agent uses it a different way.

- Momentum: An agent just keeps on going, even though the evidence isn’t strong.

- Opacity: After the event, nobody can really say why the system acted the way it did.

At that point, the problem isn’t that the agents aren’t capable – the problem is that the system just doesn’t have a clear way to lay out responsibilities, boundaries and proof. That’s exactly what Promise Theory gives you.

The Promise Template: The foundation for every AI agent’s promise

To get agents that behave consistently, every role needs a promise profile – something that other agents (and humans) can rely on. Here’s a down-to-earth template that works across lots of different architectures.

1) Capability promise: this is what I’m capable of

Start with the agent’s declared abilities:

- What tools does it have access to?

- What data does it have access to, and where does it get that data from?

- What actions can it perform, and what does it plan on doing with them?

Example: “I can grab and summarise whatever approved sources I can find.”

2) Boundary promise: where I stop, and don’t get ahead of myself

Next you want to be super clear about where the agent stops. This is what keeps it from getting too big for its britches:

- What tools is it not allowed to use?

- What actions does it need to steer clear of?

- What environments is it not allowed to do its thing in?

Example: “I won’t write to files or call external services outside of the approved list of tools I’ve got access to.”
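A boundary promise like this one can be enforced with something as simple as an allowlist in front of every tool call. This is a minimal sketch under assumed names (`APPROVED_TOOLS`, `BoundaryViolation`, `call_tool`), not a reference implementation.

```python
APPROVED_TOOLS = frozenset({"search_docs", "summarise"})  # assumed allowlist


class BoundaryViolation(Exception):
    """Raised when an agent is asked to step outside its boundary promise."""


def call_tool(name: str, tools: dict, *args):
    """Run a tool only if it is on the approved list; refuse otherwise."""
    if name not in APPROVED_TOOLS:
        raise BoundaryViolation(f"tool {name!r} is outside the approved list")
    return tools[name](*args)
```

Raising an exception rather than quietly skipping the call means the refusal itself ends up in the logs.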

3) Dependency promise: what I need to do my thing

This is where most agents go wrong – we ask them to act without the right gear. So be clear about what inputs they need to do their job:

- What data do they need to get the job done?

- What’s the shape of data they can work with?

- When and how do they need approval to act?

Example: “I need the latest schema and an approval token for any irreversible changes I make.”
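A dependency promise can be checked before the agent starts, so it fails early instead of improvising. The sketch below assumes a hypothetical `TaskInput` shape; it only checks that a schema version and approval token are present, not that the schema really is the latest one.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class TaskInput:
    """Hypothetical inputs for a task that may change data."""
    schema_version: Optional[str] = None
    approval_token: Optional[str] = None
    irreversible: bool = False


def missing_dependencies(task: TaskInput) -> list[str]:
    """List what the agent still needs before it can keep its promise."""
    missing = []
    if task.schema_version is None:
        missing.append("schema_version")
    # An approval token is only required for irreversible changes.
    if task.irreversible and not task.approval_token:
        missing.append("approval_token")
    return missing
```

An empty list means the agent has what it asked for; anything else is a reason to stop and request the missing input.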

4) Evidence promise: how I show you I got it right

The agent should be able to show the evidence it compiled, what data it used and why it came to that conclusion. Trust is built not just by being confident but by showing your work, and your work is what proves your results:

- Where are you getting your facts from? Show me the sources you used.

- What tools were you using, and can you show me the records of that?

- What logs and diffs do you have to show what happened?

- Can you leave a clear record of what you did?

Example: “Every time I reach a conclusion I list out the sources I looked at and leave a trail of everything I used so that’s all there in the record.”
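Here is one possible shape for that trail: a small record that travels with each conclusion and collects sources and tool calls as the agent works. `EvidenceTrail` and its methods are hypothetical names chosen for this sketch.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class EvidenceTrail:
    """Hypothetical evidence record attached to a single conclusion."""
    conclusion: str
    sources: list[str] = field(default_factory=list)
    tool_calls: list[dict] = field(default_factory=list)

    def cite(self, source: str) -> None:
        """Record a source the conclusion relies on."""
        self.sources.append(source)

    def log_tool(self, tool: str, **arguments) -> None:
        """Record a tool call and the arguments it was made with."""
        self.tool_calls.append({"tool": tool, "arguments": arguments})

    def is_backed(self) -> bool:
        # A conclusion with no sources does not keep the evidence promise.
        return len(self.sources) > 0

    def to_json(self) -> str:
        """Serialise the trail so it can be stored alongside the result."""
        return json.dumps(asdict(self), sort_keys=True)
```

Storing the JSON next to the result gives reviewers something concrete to inspect after the fact.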

5) Quality promise: what we mean by success

A promise is only good if you can actually check whether it’s being kept, so spell out what success means for the agent’s work, including the level of certainty that’s good enough to count as an answer:

- What do you count as a good enough answer? Where do you draw the line?

- What do you do when you’re not 100% sure? What are the options?

- What are the conditions under which you stop trying to find an answer and just say “I don’t know”?

Example: “If I’m not 90% certain of something, I’ll switch to giving you a range of options, along the lines of ‘I think this is probably the right answer, but I’m not 100% sure.’”
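The 90% example can be sketched as a simple threshold rule. How an agent arrives at a confidence number is a separate (and hard) problem; this snippet just assumes each candidate answer already comes with a score between 0 and 1, and the `respond` function is a hypothetical name.

```python
CONFIDENCE_THRESHOLD = 0.90  # the 90% bar from the example above


def respond(candidates: list[tuple[str, float]]) -> dict:
    """candidates: (answer, confidence) pairs, confidence between 0 and 1."""
    if not candidates:
        return {"kind": "unknown", "message": "I don't know"}
    ranked = sorted(candidates, key=lambda c: c[1], reverse=True)
    best_answer, best_confidence = ranked[0]
    if best_confidence >= CONFIDENCE_THRESHOLD:
        return {"kind": "answer", "answer": best_answer}
    # Below the bar: offer ranked options instead of one confident answer.
    return {"kind": "options", "options": [answer for answer, _ in ranked]}
```

The useful part is that all three outcomes (answer, options, “I don’t know”) are explicit, so each one can be tested.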

6) Escalation promise: when do you admit you don’t know

Finally, you need to know when to stop and ask for help. A good system knows when to push its luck and when to pull back:

- What makes you go “oh no, I need a human to help me out now”?

- Who gets the handoff when that happens and what do they get handed?

- What do you include in that handoff so it doesn’t fall apart at the seams?

Example: “If I get stuck or the evidence is conflicting, I escalate to a human and pass on every option and trade-off I’ve thought of so they don’t have to start from scratch.”
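An escalation promise has two parts: the trigger and the handoff. The sketch below models both with assumed names (`Handoff`, `maybe_escalate`); the two triggers mirror the example above, and the handoff carries the options and trade-offs so the human doesn’t start from scratch.

```python
from dataclasses import dataclass, field


@dataclass
class Handoff:
    """Hypothetical packet passed to a human reviewer on escalation."""
    reason: str
    options: list[str]
    trade_offs: dict[str, str] = field(default_factory=dict)


def maybe_escalate(stuck: bool, evidence_conflicts: bool,
                   options: list[str], trade_offs: dict[str, str]):
    """Return a Handoff when a trigger fires, otherwise None."""
    if stuck:
        reason = "agent is stuck"
    elif evidence_conflicts:
        reason = "evidence is conflicting"
    else:
        return None
    # Copy the inputs so later changes by the agent don't alter the handoff.
    return Handoff(reason=reason, options=list(options), trade_offs=dict(trade_offs))
```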

Rule of thumb: if you can’t test the promise, you can’t trust it, so make sure you’re putting it to the test.

How promises make agent teams more reliable in practice

Promise Theory is especially useful when it comes to agent teams – it doesn’t just tell you what one agent can do, it helps all of them work together without any problems. Here’s a simple way to think about it:

- Agents publish what they can do (what they’ve promised) and what they can’t.

- A coordinator assigns each task to the agent whose published promises fit it, rather than to whichever one claims it can do it.

- Each agent returns its results along with a trail of how it got there.

- The system checks the results against what was promised.

- If an agent can’t keep its promise, it escalates instead of making something up.
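The steps above can be sketched as a tiny coordinator loop. Everything here is a simplifying assumption: the `Agent` shape, promises expressed as a set of task types, and “checking results against the promise” reduced to “did the agent return any evidence”.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent: published promises plus a run function."""
    name: str
    promises: frozenset          # task types the agent promises to handle
    run: Callable[[str], dict]   # returns {"result": ..., "evidence": [...]}


def coordinate(task_type: str, payload: str, agents: list[Agent]) -> dict:
    """Assign by published promise, run, then check the result for evidence."""
    agent = next((a for a in agents if task_type in a.promises), None)
    if agent is None:
        return {"status": "escalated", "reason": f"no agent promises {task_type!r}"}
    outcome = agent.run(payload)
    if not outcome.get("evidence"):
        # The evidence promise was not kept, so the result is not accepted.
        return {"status": "escalated", "reason": f"{agent.name} returned no evidence"}
    return {"status": "done", "agent": agent.name, **outcome}
```

A real coordinator would need richer matching and verification, but even this version never silently accepts an unbacked result.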

As a result you get a lot fewer headaches and a lot fewer surprises. You don’t just hope the system will behave, you design it to behave.

The biggest benefit: fewer costly wrong actions

A wrong explanation is annoying, but a wrong action is expensive, and it’s a good idea to keep those two problems separate:

- Hallucinated text (wrong output)

- Hallucinated action (wrong action or tool use)

Promise Theory reduces the risk of wrong actions by making execution depend on the answers to three questions:

- Is there enough evidence to make a move?

- If you’re unsure, do you escalate or just keep on going?

- If it’s outside your bounds, do you refuse to act?

Now this doesn’t mean the agent is suddenly useless just because it’s not 100% sure – no way – it can still be super helpful. It can say “here are some options”, explain the trade-offs and ask some really good questions. It just can’t “bull in a china shop” its way through the uncertainty and act without thinking about the consequences.
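One way to picture this is as a gate that every proposed action passes through before anything is executed. This is a rough sketch with made-up names and thresholds (`Decision`, `gate`, one piece of evidence, 0.9 confidence), not a description of any particular product.

```python
from enum import Enum


class Decision(Enum):
    ACT = "act"
    OFFER_OPTIONS = "offer_options"
    ESCALATE = "escalate"
    REFUSE = "refuse"


def gate(action: str, allowed_actions: set, evidence_count: int,
         confidence: float, min_evidence: int = 1,
         min_confidence: float = 0.9) -> Decision:
    """Decide what to do with a proposed action before executing it."""
    if action not in allowed_actions:
        return Decision.REFUSE          # outside the agent's bounds
    if evidence_count < min_evidence:
        return Decision.OFFER_OPTIONS   # still helpful, but no action taken
    if confidence < min_confidence:
        return Decision.ESCALATE        # unsure, so hand over to a human
    return Decision.ACT
```

Only one of the four outcomes actually executes anything; the other three keep the agent useful without letting it act on shaky ground.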

What this looks like in practice with Scout-itAI’s Promise Theory approach

Scout-itAI takes the principles of Promise Theory and applies them in real life, moving from a “what if” idea to practical application.

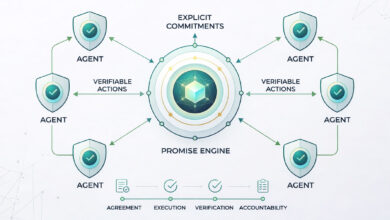

Scout-itAI frames it as a Promise Engine that sits right between what you ask for and the answer:

Input → Promise Engine → Output

- You send in what you want and what you’re trying to do.

- The Promise Engine checks all that out: boundaries, dependencies and whether there’s evidence for the job.

- And that’s when the results start coming through: a thumbs up for an action, a safe recommendation or maybe even an escalation.

Scout-itAI makes one particular point: lots of smaller agents, each with its own job, work a heck of a lot better than one big ‘jack of all trades’ agent trying to do it all. Roles like coordination, checking in on how things are going, watching for changes and forecasting all show how promise-driven design handles added complexity: smaller agents with clear jobs and limits.

For a formal reference point, the ISO/IEC 42001 AI management system standard outlines how organizations can structure AI governance and accountability.

That’s really the key: the more autonomy the system has, the more it needs to be able to turn intentions into clear, reliable promises. That’s what Promise Theory is all about, after all.

Conclusion

Agent systems have real power because they don’t just spit out answers but actually spring into action, and that’s brilliant. But give them free rein with no one to answer to when things go wrong, and that’s a recipe for complete and utter disaster.

Promise Theory comes in handy because it forces you to nail down some really fundamental things:

- Exactly what the agent is going to do and actually follow through on

- What it’s going to steer clear of altogether

- The kind of evidence it needs to present to back up whatever it says

- And at what point it should just know better than to keep pushing forward

When you get all that sorted, you don’t just end up with a system that sounds nice in theory. You end up with one that behaves the way you expect, one you can explain, put to the test and actually trust.

Want to see what promise-based governance looks like in practice? Explore Scout-itAI’s Promise Theory Engine.

Frequently Asked Questions

What is Promise Theory for AI agents?

Promise Theory is a way to design systems of agents where each agent makes clear, testable commitments about what it will do, what it won’t do, and how it proves results.

Why does it matter for multi-agent systems?

Because the minute you have more than one agent, getting them to work together becomes the tough bit. Promises help cut down on overlap, reduce confusion, and prevent the kind of surprise moves that cause problems.

How are promises different from permissions and policies?

Permissions decide who gets access, and that’s it. Policies are the rules that govern behaviour. Promises go further: they set out what you can expect an agent to do, and what evidence you can trust to prove it followed through.

What should a promise profile include?

At the very least: a clear statement of the agent’s capabilities, its boundaries, what it relies on, the proof it has to provide to be trusted, quality checks, and a set of triggers that kick in when things go wrong.

Does Promise Theory stop hallucinations?

No, but it can stop them from turning into actual actions by making the agent pause and say where its conclusions came from when things get uncertain.

How does Promise Theory reduce risky actions?

By limiting what agents can do, deciding what requires approval, and making sure they produce proof before they act.

How do you check that an agent kept its promises?

By asking for receipts (records of what it did and when, or any other proof) and checking them against the promise profile it was supposed to keep.

Can Promise Theory be used with tool-using agents?

Yes, and it’s especially useful when agents start using tools for the first time, because it makes clear which tools are allowed, how they should be used, and what evidence the agents need to hand over.

What is a Promise Engine?

A Promise Engine is a piece of software that checks the agent’s plans against its limits, its dependencies and the evidence it needs to prove its case, and outputs either an okay to go or a recommendation not to act.

How do I get started?

Start small: write promise profiles for key roles, get the agents to produce evidence, and set up escalation for when things go wrong. Then gradually add more agents as you get the hang of it.

Tony Davis

Director of Agentic Solutions & Compliance